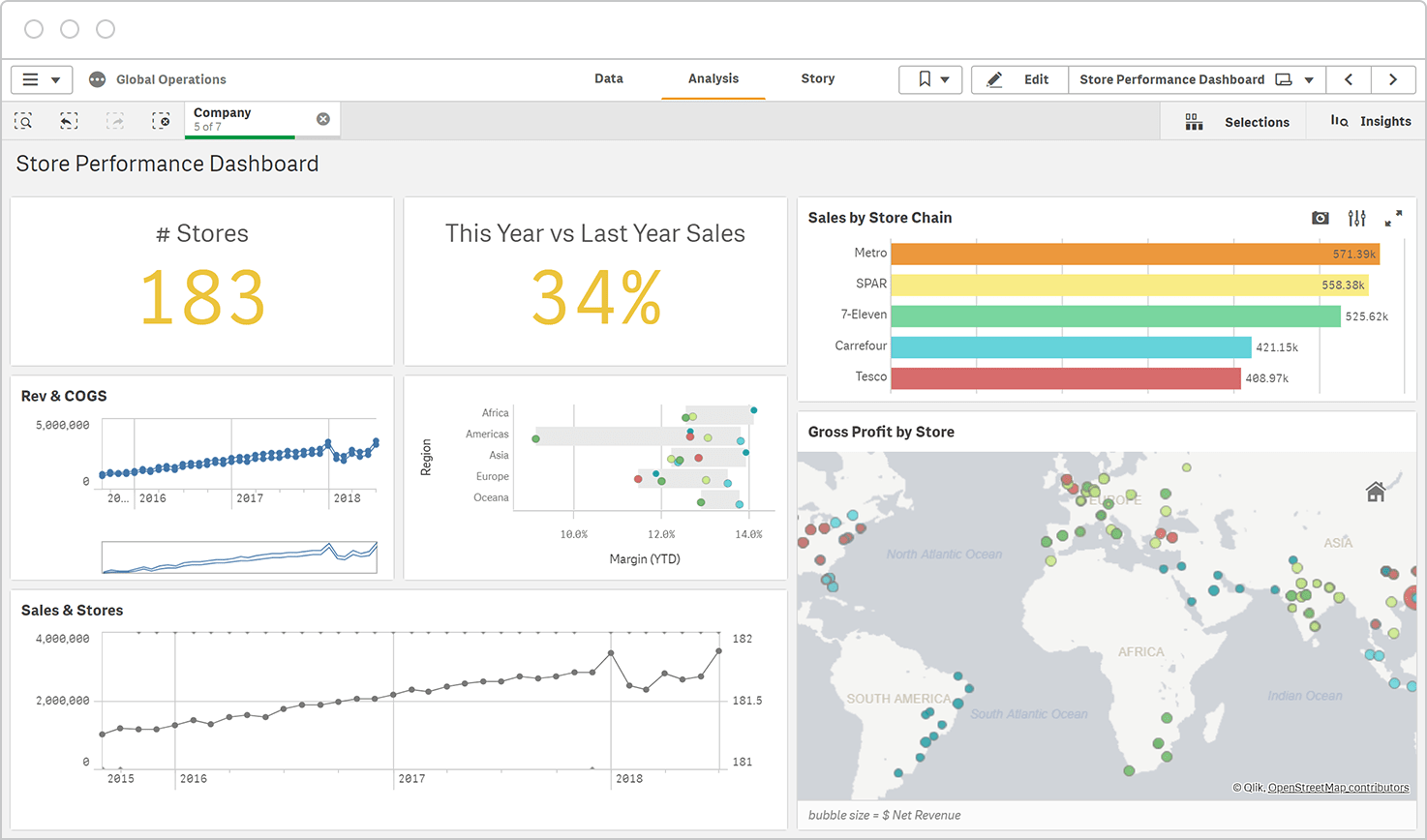

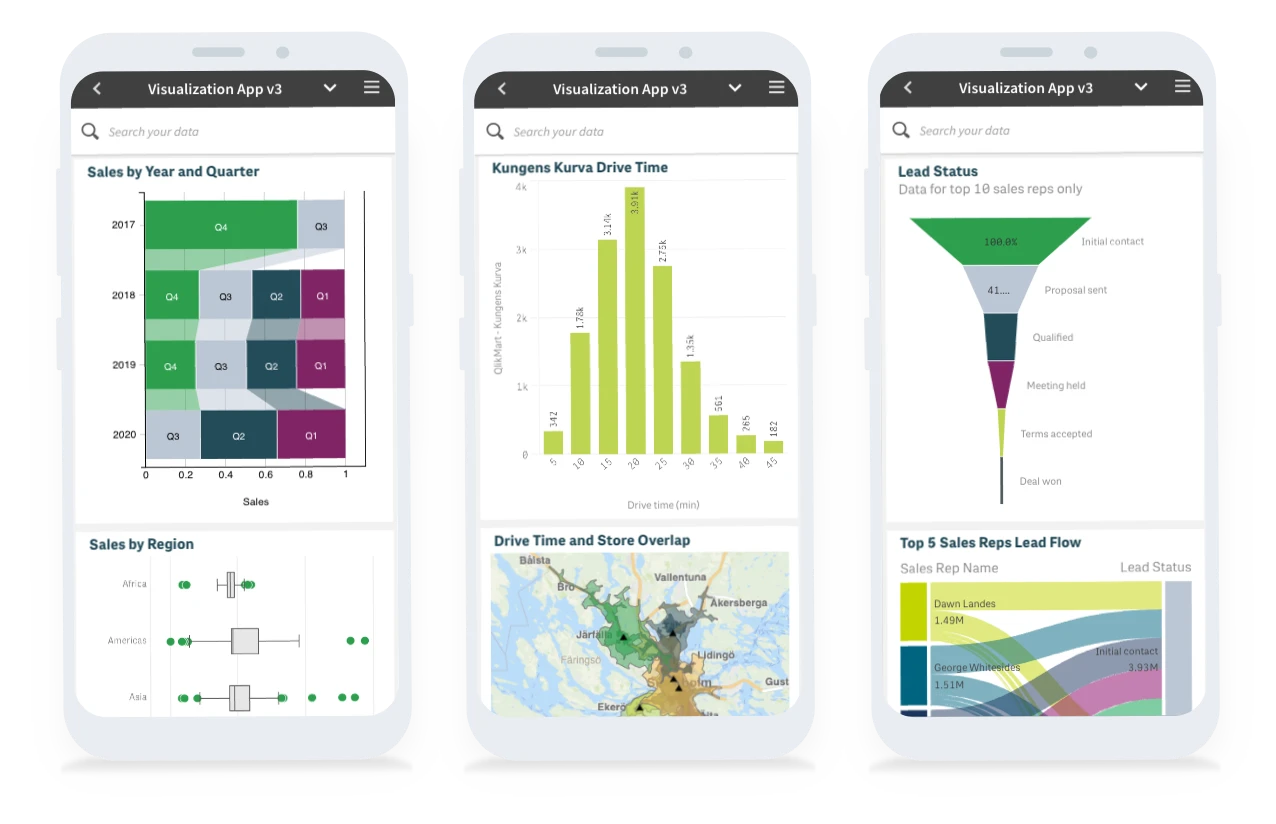

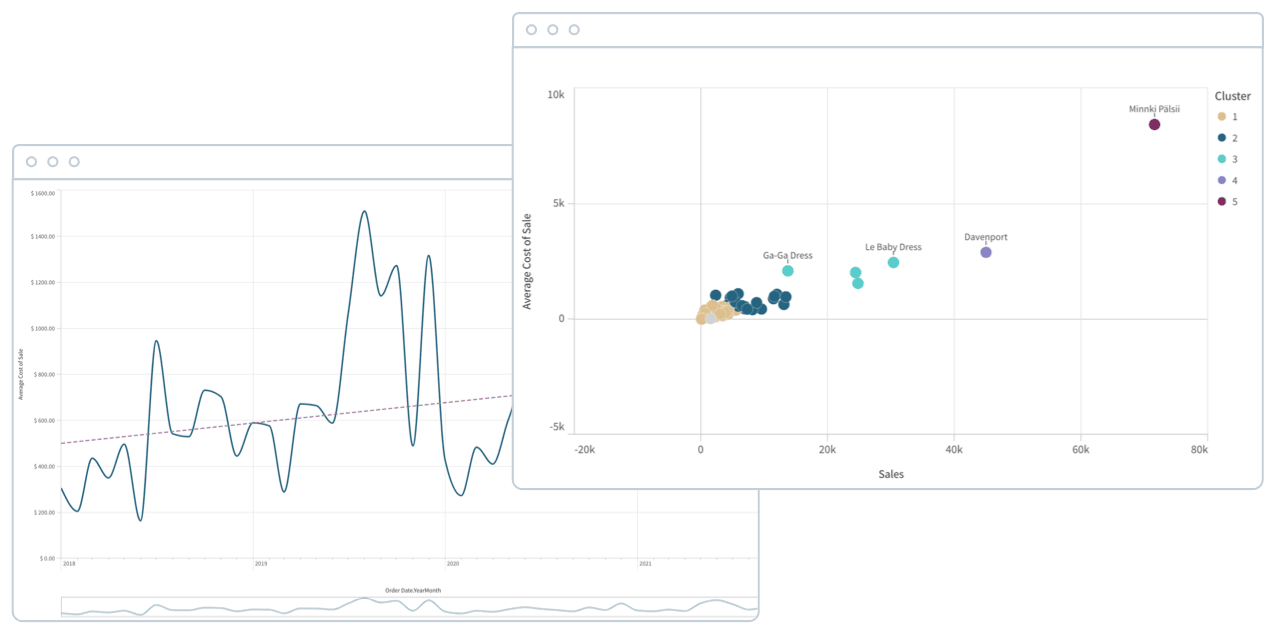

Self-service Visualization

Easily combine, load, visualize, and explore your data, no matter how large (or small). Ask any question and follow your curiosity. Search, select, drill down, or zoom out to find your answer, or instantly shift focus if something sparks your interest. Every chart, table, and object is interactive and instantly updates to the current context with each action. A broad library of world-class, smart visualizations conveys meaning, reveals the shape of your data, and helps pinpoint outliers. And get to insight faster with Insight Advisor, which assists with auto-generated analysis, chart recommendations, and data combination.